April 21, 2026

Claude · Bigdata.com · MCP · Private content API · Skills/5 minutes read

Every research desk eventually hits the same wall: your best signals live in private email, connectors, and uploads, while the numbers that justify a thesis live in market data. Copy-pasting between inboxes and terminals does not scale. Neither does “ask the model” without a repeatable way to discover what you actually have, ground answers in both corpora, and leave a memory for the next run.

This post walks through a workflow we implemented end to end: private newsletter analysis plus public and tearsheet enrichment, using your files as a first-class source.

The problem: two silos, one question

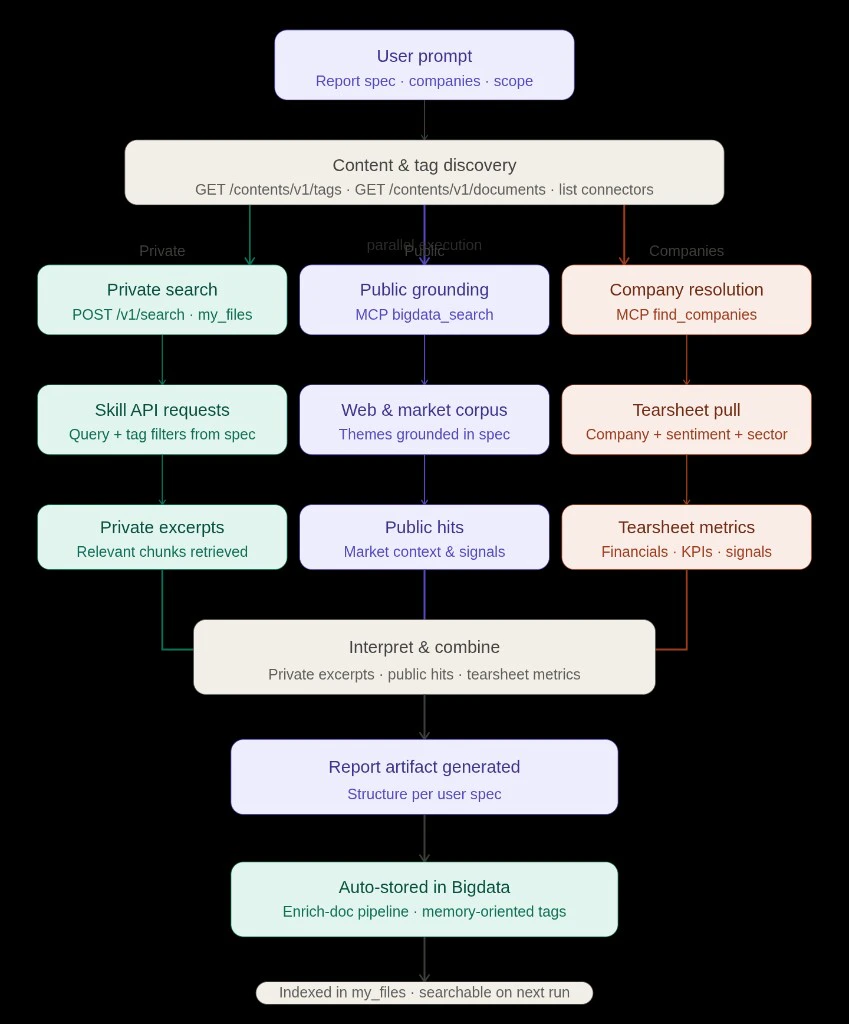

Analysts and operators routinely need questions that span private material and market reality at once, weighing what their own content imply against consensus, prices, and public news flow or turning their research documents into something they can revisit with ease instead of a static file on disk. Traditional tooling still splits private retrieval and market intelligence. The friction is not curiosity; it is workflow: discovery, parallel retrieval, synthesis, and persistence.The approach: one prompt, three parallel lanes, one memory loop

We combined four ideas:- Bigdata.com MCP:

bigdata_search,find_companies, company and sentiment tearsheets, and related tools for public grounding and structured company metrics (Bigdata MCP documentation describes the broader Claude + MCP + skills stack for institutional-style outputs). - Bigdata private content API: Connectors, tags, documents, and additional search requests with private content specific filters, so the agent searches in your personal available data, not a vague “upload folder”.

- A Claude skill (“playbook”): Routes when to list tags, when to filter search by tag, when to call

find_companiesbefore tearsheets, and how to upload artifacts back through enrich-document. - Automatic tagging from email provenance: So “only mail from this sender” becomes a stable filter instead of brittle full-text guesses.

The prompt

The run was driven by this exact user prompt:Discovery: inventory before retrieval

Before any heavy retrieval, the agent can use the private content APIs to explore what is actually indexed: GET/contents/v1/tags, GET /contents/v1/documents, and connector listings when needed. Those calls support the same questions a careful analyst would ask up front: What can I filter on?, What content do I have access to?, Roughly what volume sits behind each tag or connector? So the next step is grounded in real names and counts instead of guesswork. Once that inventory is clear, later search filters such as query.filters.tag.any_of can use real tag names from the platform, which keeps the newsletter or sender scope in the prompt aligned with identifiers that actually exist in the index.

Private lane (teal): skill-shaped API calls

Private grounding runs through the Search API with category my_files: Claude issuesPOST /v1/search with query.text shaped from the report spec, and narrows results with query.filters.tag.any_of when tags are known, including sender-level tags produced by automatic EML metadata tagging on ingest, so provenance becomes a filter instead of a brittle full-text workaround. For this newsletter exercise, that meant tags such as from:dan@tldrnewsletter.com to stay on the TLDR slice, multiple thematic searches under that constraint to maximize recall and then ranking recurring organizations from the retrieved set (OpenAI, Anthropic, Alphabet, Apple, Meta, Nvidia, fintech names, and others).

Public lane (purple): MCP bigdata_search

The same themes that drive private query.text are mirrored into bigdata_search so the narrative is anchored in web and market corpus context: quotes, catalysts, and sector chatter that do not exist in your private content. Those public pulls ran in parallel with the private searches so the write-up could contrast newsletter emphasis with what the wider market corpus was highlighting.

Companies lane (coral): resolve, then tearsheet

For each named issuer,find_companies returns the RavenPack entity id and public/private type. That unlocks bigdata_company_tearsheet and bigdata_sentiment_tearsheet (public names) so tables can carry EPS surprises, multiples, consensus, KPIs, and media sentiment scores. After the organization shortlist was stable, Claude pulled tearsheets for key public filers and a private-company profile where data was available, so the HTML tables were filled from tools, not hand-typed placeholders.

Deliverable: HTML, workflow notes, and the repeatable contract

With retrieval and enrichment in place, Claude wrote the self-contained HTML report. See the generated artifact below:Why automatic EML tagging matters

Email connectors are noisy. Automatic EML metadata tagging turns “who sent this?” into a first-class filter in the Search API. The user’s hint “ground only mail from this sender” becomes a tag constraint, not a fragile regex over bodies. That is how a newsletter connector stays usable at scale: each issue inherits predictable and discoverable tags.Skills as the glue in Claude

We ran this in Claude with the Bigdata MCP connector enabled and a packaged skill that encodes the playbook, similar idea as the ecosystem’s Financial Research Analyst skill. The pattern is always structured routing + tool contracts + provenance in the answer. Our skill encodes:- Inventory-first when tags or connectors are unknown.

find_companiesbefore tearsheets to avoid wrong-entity financials.- Enrich upload when the user wants the artifact to join

my_fileswith explicit tags for the next “memory workload.”

What comes next

This is only the beginning. We are actively expanding the library of skills and workflows available through Bigdata MCP. If your team has a research workflow that could benefit from this approach, email us at support@bigdata.com. We would love to hear what you are building or what we can build together.Relevant links:

- Professional-Grade Financial Reports with Claude, Bigdata MCP, and Skills: Claude, MCP, and skills stack for institutional-style outputs.

- Financial Research Analyst skill: packaged playbook for research and writing with Bigdata tools.

- Claude MCP integration: enabling the Bigdata connector in Claude.

- Content API introduction: connectors, uploads, and private document operations.

- Upload your own content: tags, listing documents, and using tags in search.

- List tags: GET

/contents/v1/tags. - List documents: GET

/contents/v1/documents. - Search documents: POST

/v1/search, includingmy_filesandquery.filters.tag. - Search in uploaded files: end-to-end upload and search over private content.

VP

Víctor Pimentel Naranjo

Senior Product Manager, Team Lead